Thread scheduling

Some data processing tasks in the HERD experiment, especially the simulation of high-energy heavy nuclei, require substantial computing resources. Because single-core performance now improves only slowly, applying parallel computing in software development to exploit multi-core processors is a natural choice. To help application developers write efficient and reliable parallel applications, SNiPER provides highly encapsulated thread pool management, thread scheduling, and thread recycling, built on Intel TBB (Threading Building Blocks).

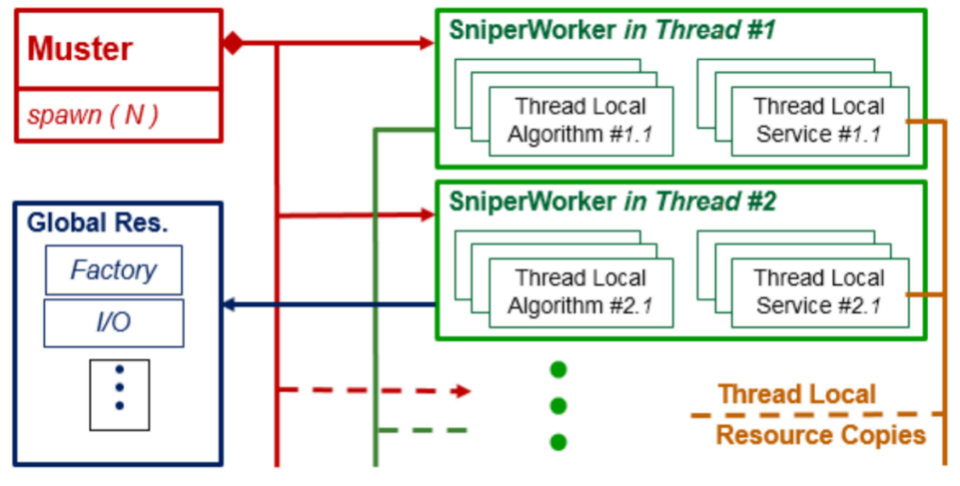

The following figure shows how SNiPER runs parallel applications. For each worker thread, SNiPER creates a corresponding Task and places its own copy of each algorithm and service instance in that Task. During job execution, each worker thread runs the event loop independently, so events are processed in parallel. All worker threads are created and recycled by Muster, SNiPER's multi-task scheduler. In addition to algorithms and services used exclusively by a single thread, SNiPER also supports services shared by all threads, such as memory management and data input/output services.
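The event-parallel pattern described above can be illustrated with a minimal Python sketch. It is not SNiPER code: `CountingAlg`, `SharedEventSource`, `run_event_loop`, and `run_parallel` are hypothetical names, and the standard-library `ThreadPoolExecutor` stands in for SNiPER's TBB-based scheduler. The sketch only shows the two ideas from the text: each worker owns a private copy of the algorithm, while one service is shared by all workers.

```python
import copy
import threading
from concurrent.futures import ThreadPoolExecutor


class CountingAlg:
    """Hypothetical algorithm with per-instance state (not a SNiPER class)."""

    def __init__(self):
        self.n_processed = 0

    def execute(self, event):
        # No lock needed: each worker thread has its own instance.
        self.n_processed += 1
        return event * event


class SharedEventSource:
    """Hypothetical shared service: hands out events under a lock."""

    def __init__(self, events):
        self._events = iter(events)
        self._lock = threading.Lock()

    def next_event(self):
        with self._lock:
            return next(self._events, None)


def run_event_loop(alg, source):
    """Event loop that every worker thread runs independently."""
    results = []
    while (event := source.next_event()) is not None:
        results.append(alg.execute(event))
    return results


def run_parallel(prototype_alg, events, n_workers=4):
    """Create one private algorithm copy per worker and run all event loops."""
    source = SharedEventSource(events)  # shared by all workers
    algs = [copy.deepcopy(prototype_alg) for _ in range(n_workers)]
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        futures = [pool.submit(run_event_loop, alg, source) for alg in algs]
        results = [r for f in futures for r in f.result()]
    return algs, results
```

Calling `run_parallel(CountingAlg(), range(100))` processes every event exactly once; how the events are split between workers depends on thread timing, which is why the shared source needs a lock and the private algorithm copies do not.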

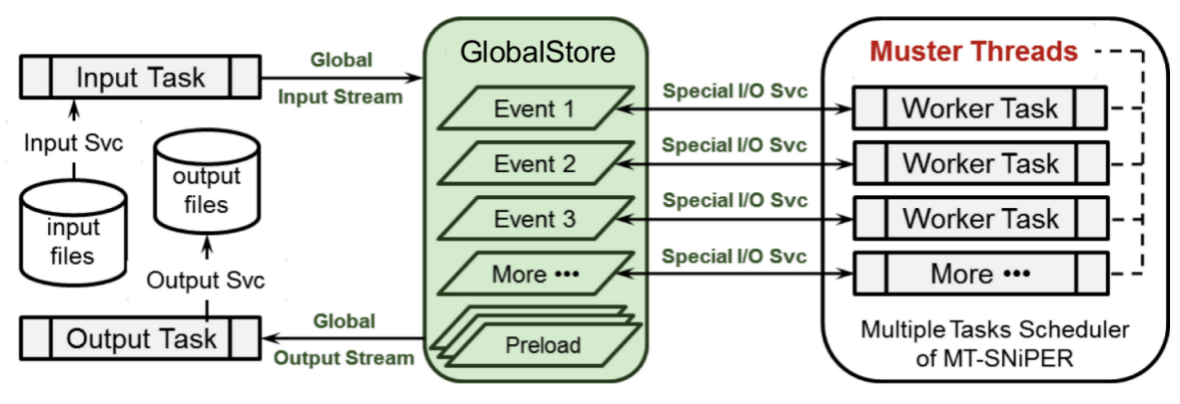

SNiPER's Muster is built on the thread scheduler provided by TBB. It binds each Task it creates to a TBB task (thread) to achieve thread scheduling. In parallel SNiPER applications, each worker thread processes events independently, so the serial event loop no longer applies. As shown in the following figure, at runtime Muster fetches input data, which has been read from the input files, from a global memory pool (GlobalStore) and dispatches it to the worker threads. After processing, the worker threads deposit the processed data back into the memory pool, where it is handed to the output service for writing to files. To ensure high input/output performance and flexibility, SNiPER runs the data input and output services in two separate threads. These input/output threads work with the GlobalStore to form a data management system that meets the requirements of parallel computing. The specific design is discussed in the next section.
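The data flow described above (input thread, memory pool, worker threads, output thread) can be sketched as a small producer/consumer pipeline. This is only an illustration under simplifying assumptions, not the SNiPER design: the `GlobalStore` class here is a hypothetical stand-in made of two bounded queues, all function names are invented for the example, the workers pull events directly instead of receiving them from a scheduler, and output order is not preserved.

```python
import queue
import threading

_DONE = object()  # sentinel marking the end of the event stream


class GlobalStore:
    """Hypothetical stand-in for the global memory pool: two bounded buffers."""

    def __init__(self, capacity=8):
        self.pending = queue.Queue(maxsize=capacity)   # filled by the input thread
        self.finished = queue.Queue(maxsize=capacity)  # drained by the output thread


def input_thread(store, events, n_workers):
    """Read events (here: from an iterable) and deposit them in the store."""
    for event in events:
        store.pending.put(event)
    for _ in range(n_workers):
        store.pending.put(_DONE)  # one stop marker per worker


def worker_thread(store, process):
    """Take an event from the store, process it, put the result back."""
    while True:
        event = store.pending.get()
        if event is _DONE:
            store.finished.put(_DONE)  # tell the output thread this worker ended
            return
        store.finished.put(process(event))


def output_thread(store, n_workers, sink):
    """Collect processed events until every worker has signalled completion."""
    remaining = n_workers
    while remaining:
        item = store.finished.get()
        if item is _DONE:
            remaining -= 1
        else:
            sink.append(item)  # a real output service would write to a file


def run_pipeline(events, process, n_workers=3):
    store = GlobalStore()
    sink = []
    threads = [
        threading.Thread(target=input_thread, args=(store, events, n_workers)),
        threading.Thread(target=output_thread, args=(store, n_workers, sink)),
    ]
    threads += [
        threading.Thread(target=worker_thread, args=(store, process))
        for _ in range(n_workers)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sink
```

Keeping input and output in their own threads means the workers never block on file access directly; they only wait when a buffer is empty or full, and the buffer capacity bounds memory use.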